One of the more interesting features of the new OM System ‘Olympus’ OM-1 camera is its ‘quad-Bayer’ sensor design. This article explains what this means, and what benefits and demerits it brings.

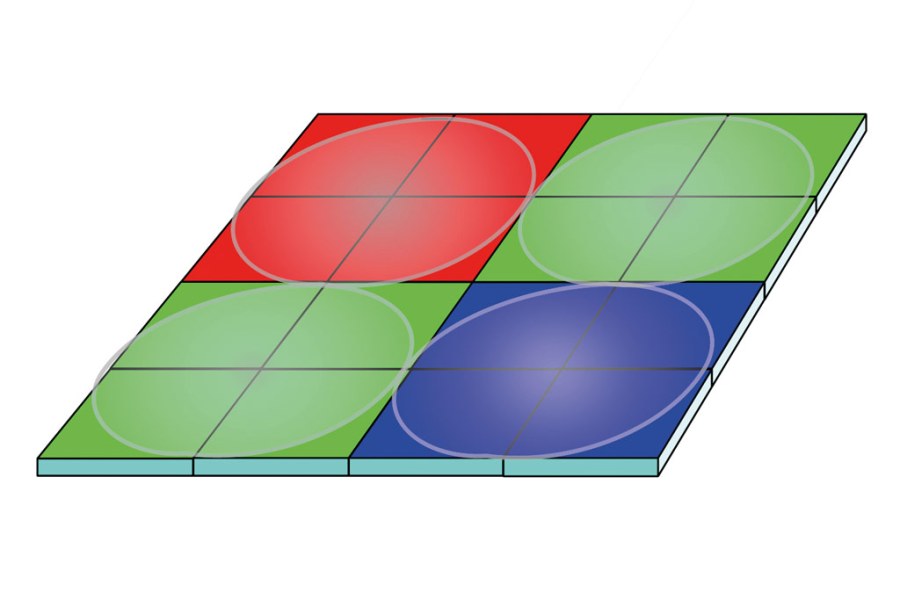

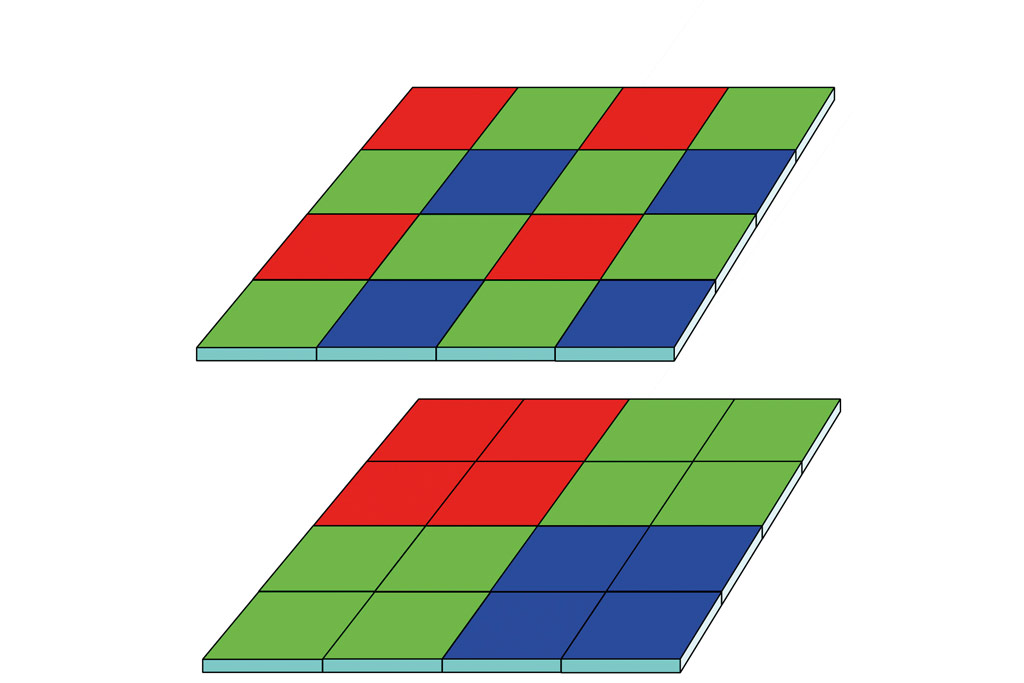

The crux is the use of a Bayer colour filter array in which each ‘red’, ‘green’ or ‘blue’ filter patch covers four pixels, rather than one. This is illustrated in the figure below, which shows a normal Bayer array compared with the quad-Bayer alternative. At this stage we need to clarify some terminology. Although many manufacturers label each of these pixels a ‘photo-diode’, each of the four pixels under one colour filter is fully functional and capable of being read out independently of the others. Indeed, there would be little point in the design were this not the case. So, at first sight, it appears that the only effect of the quad-Bayer arrangement is to quarter the resolution of the sensor. Thus, the 20MP sensor in the OM-1 actually has 80 million pixels. Later on, we’ll see why this is a good idea.

Figure 1: A normal Bayer sensor (top) has a separate colour filter over each pixel. In a quad-Bayer sensor (bottom), each colour filter covers four pixels.
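For readers who find code clearer than diagrams, the two layouts in figure 1 can be sketched in a few lines of Python. This is only an illustration: the red-green/green-blue ordering of the basic tile and the function names are assumptions made for the example, not details of the OM-1 sensor.

```python
# Illustrative sketch only. The RGGB tile ordering is an assumption;
# the article does not say which ordering any particular sensor uses.

def bayer_colour(row, col):
    """Filter colour over pixel (row, col) in a normal Bayer array."""
    tile = (("R", "G"), ("G", "B"))
    return tile[row % 2][col % 2]


def quad_bayer_colour(row, col):
    """Filter colour over pixel (row, col) when each patch covers 2x2 pixels."""
    # Every 2x2 block of pixels shares the filter of one 'normal' Bayer site.
    return bayer_colour(row // 2, col // 2)


def render(colour_at, size):
    """Return the top-left size x size corner of a layout as strings."""
    return ["".join(colour_at(r, c) for c in range(size)) for r in range(size)]


normal = render(bayer_colour, 4)
quad = render(quad_bayer_colour, 4)

# Four pixels sit under every colour filter, so a 20MP output
# implies four times as many physical pixels.
output_megapixels = 20
pixels_per_filter = 4
total_megapixels = output_megapixels * pixels_per_filter

for line in normal + [""] + quad:
    print(line)
print(total_megapixels, "million pixels")
```

The second grid printed is simply the first with every filter stretched over a 2x2 block, which is why the pixel count quadruples while the number of colour patches stays the same.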

The quad-Bayer sensor arrangement is quite common in phones. You may wonder how a phone lens can ever project enough resolution to justify up to 100MP on the sensor. The answer is that it can’t; however, using a smaller pixel aperture can increase resolution. In a phone, the quad-Bayer arrangement allows all four pixels under each filter to be combined in low light. In bright light the pixels are used individually, thus reducing the effective pixel aperture and increasing sharpness.
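The low-light combination described above can be sketched as follows. This is a minimal illustration that assumes the four values are simply summed; real sensors may combine charge on the chip or average the values instead, and `bin_quads` is a hypothetical name chosen for the example.

```python
# Illustrative sketch only: combine the four pixels under each colour
# filter by summing them. Whether a real sensor sums, averages or bins
# charge in hardware is not covered here.

def bin_quads(raw):
    """Sum each 2x2 block of a quad-Bayer readout into a single value."""
    height, width = len(raw), len(raw[0])
    if height % 2 or width % 2:
        raise ValueError("image dimensions must be even")
    return [
        [
            raw[r][c] + raw[r][c + 1] + raw[r + 1][c] + raw[r + 1][c + 1]
            for c in range(0, width, 2)
        ]
        for r in range(0, height, 2)
    ]


# A made-up 4x4 readout: one quad for each of four colour filters.
raw = [
    [10, 12, 40, 42],
    [11, 13, 41, 43],
    [30, 32, 20, 22],
    [31, 33, 21, 23],
]
binned = bin_quads(raw)
print(binned)
```

The result has half as many rows and columns as the readout, and each value sits under a single colour filter, so the combined image is laid out like an ordinary Bayer mosaic at a quarter of the pixel count.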

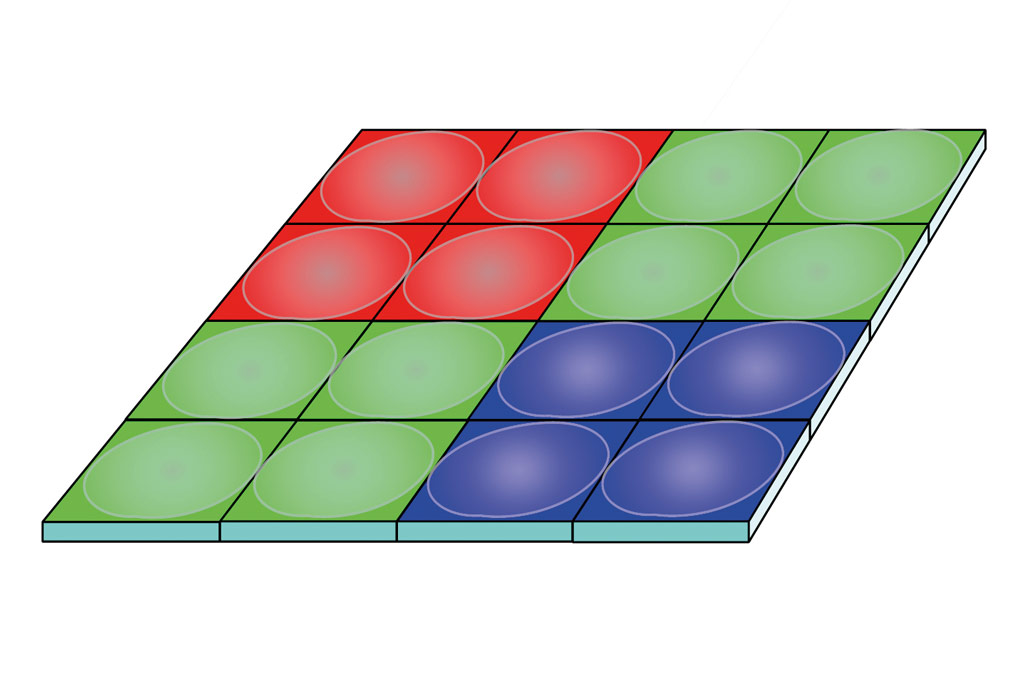

The first consumer cameras to use this arrangement were the low pixel-count, video-specialised Panasonic Lumix GH5S and Sony Alpha 7S III. Neither camera offers the switchable pixel combination described above, so what is the advantage of the quad-Bayer arrangement? Both these cameras use the arrangement shown in figure 2, with a separate microlens over each individual pixel. This maintains the efficiency of the microlens in focusing light onto the active area of each pixel. However, this design gains nothing over using a larger native pixel, and the real reason for its use lies in production economics. A low pixel-count sensor is a very specialist product, and if it can be made as a variant of some other product, then production costs can be reduced. Taking the Sony as an example, its 12MP sensor can be derived from a 48MP sensor, and it’s no surprise that Sony Semiconductor does indeed make full-frame sensors with around this pixel count.

Quad-pixel autofocus

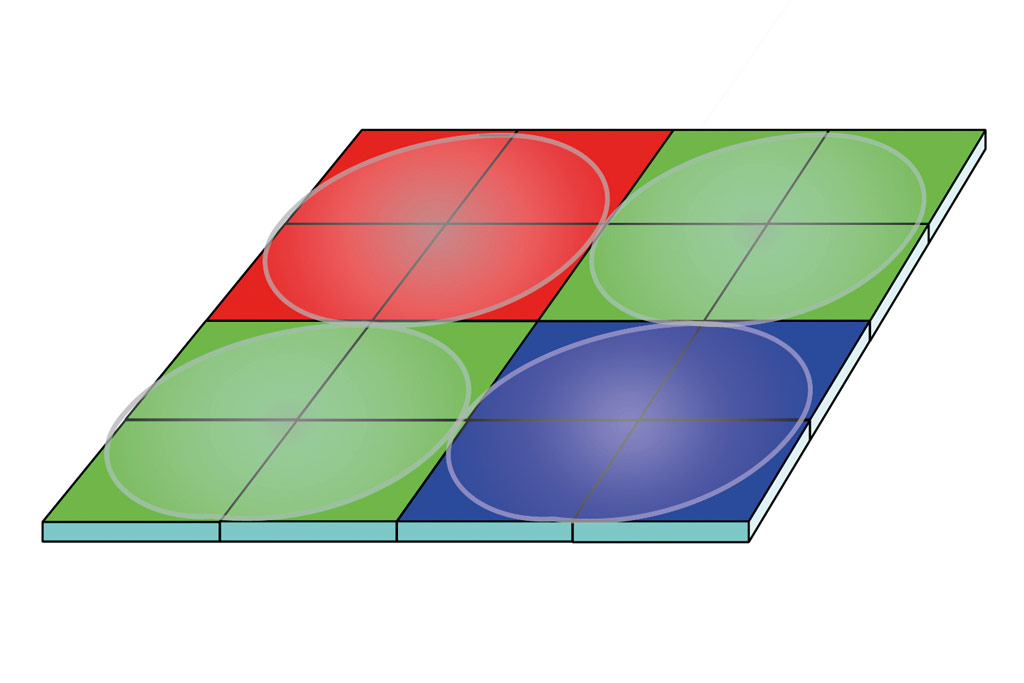

So, now to the OM-1. This uses a different microlens arrangement, shown in figure 3. Here one microlens covers each quad-pixel colour filter. The disadvantage is reduced efficiency, since the microlens also focuses light on non-active parts of each pixel. However, the advantage is that the sensor now provides a phase-difference focus detection mechanism, similar to Canon’s dual-pixel arrangement, but working both horizontally and vertically.

In this sensor design, every pixel in the frame can be used as a focus pixel, allowing ‘cross-type’ detection, and when the frame is captured, no focusing pixels need to be interpolated over. Canon provides a ‘dual pixel raw’ function, which allows depth information to be extracted from the picture. A quad-pixel raw option would be very interesting indeed, but so far OM System doesn’t offer it.
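The horizontal and vertical phase detection described above can be sketched in code. The four pixels under one microlens look through different parts of the lens, so when the subject is out of focus the image seen by the left-hand pixels is displaced relative to the one seen by the right-hand pixels (and likewise top against bottom). The sketch below is only an illustration with made-up data; `split_views` and `best_shift` are hypothetical helpers, and the simple sum-of-absolute-differences search is an assumed stand-in for whatever processing a real camera uses.

```python
# Illustrative sketch only: not OM System's or Canon's actual method.

def split_views(raw):
    """Return (left, right, top, bottom) images, one value per quad."""
    left, right, top, bottom = [], [], [], []
    for r in range(0, len(raw), 2):
        l_row, r_row, t_row, b_row = [], [], [], []
        for c in range(0, len(raw[0]), 2):
            tl, tr = raw[r][c], raw[r][c + 1]
            bl, br = raw[r + 1][c], raw[r + 1][c + 1]
            l_row.append(tl + bl)   # left-hand pair
            r_row.append(tr + br)   # right-hand pair
            t_row.append(tl + tr)   # top pair
            b_row.append(bl + br)   # bottom pair
        left.append(l_row)
        right.append(r_row)
        top.append(t_row)
        bottom.append(b_row)
    return left, right, top, bottom


def best_shift(a, b, max_shift):
    """Integer shift s that best aligns a[i] with b[i + s]."""
    best, best_cost = 0, float("inf")
    for s in range(-max_shift, max_shift + 1):
        pairs = [(a[i], b[i + s]) for i in range(len(a)) if 0 <= i + s < len(b)]
        cost = sum(abs(x - y) for x, y in pairs) / len(pairs)
        if cost < best_cost:
            best, best_cost = s, cost
    return best


# Made-up defocused edge: the right-hand pixels see it two quads further along.
left_profile = [0, 0, 5, 9, 5, 0, 0, 0]
right_profile = [0, 0, 0, 0, 5, 9, 5, 0]

# Build one row of quads (two sensor rows) from the two profiles.
raw = [[], []]
for l, r in zip(left_profile, right_profile):
    raw[0] += [l, r]
    raw[1] += [l, r]

left, right, top, bottom = split_views(raw)
horizontal_shift = best_shift(left[0], right[0], 3)
print(horizontal_shift)
```

With this data the search reports a shift of 2 quads; a shift of zero would indicate that the two views coincide, in other words that the subject is in focus. Applying the same comparison down the columns of the top and bottom views would give the vertical measurement that makes the detection ‘cross-type’.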

Bob Newman is currently Professor of Computer Science at the University of Wolverhampton. He has been working with the design and development of high-technology equipment for 35 years and two of his products have won innovation awards. Bob is also a camera nut and a keen amateur photographer.


